In the original sched-design-CFS.txt, the granularity setting was described as the only "tunable" setting, "to tune the scheduler from 'desktop' (low latencies) to 'server' (good batching) workloads." In other words, we can change this setting to reduce overheads from context-switching, and therefore improve throughput at the cost of responsiveness ("latency").

I think of this CFS setting as mimicking the previous build-time setting, CONFIG_HZ. In the first version of the CFS code, the default value was 1 ms, equivalent to 1000 Hz for "desktop" usage. Other supported values of CONFIG_HZ were 250 Hz (the default), and 100 Hz for the "server" end. 100 Hz was also useful when running Linux on very slow CPUs; this was one of the reasons given when CONFIG_HZ was first added as a build setting on x86.

It sounds reasonable to try changing this value up to 10 ms (i.e. 100 Hz). We can see this old-school tuning for 'server' was still very relevant in 2011, for throughput in some high-load benchmark tests.

And perhaps a couple of other settings

The default values of the three settings above look relatively close to each other. It makes me want to keep things simple and multiply them all by the same factor :-). But I tried to look into this and it seems some more specific tuning might also be relevant, since you are tuning for throughput.

sched_wakeup_granularity_ns concerns "wake-up pre-emption", i.e. it controls when a task woken by an event is able to immediately pre-empt the currently running process. The 2011 slides showed performance differences for this setting as well.
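To make the Hz-to-milliseconds correspondence and the "multiply them all by the same factor" idea concrete, here is a minimal Python sketch. The helper names are made up for illustration. The text does not list the three settings, so the third name (`sched_latency_ns`) is an assumption, and the starting values in `current` are placeholders rather than readings from a real system.

```python
NS_PER_SECOND = 1_000_000_000


def hz_to_period_ns(hz: int) -> int:
    """Length of one period, in nanoseconds, at a given frequency in Hz."""
    return NS_PER_SECOND // hz


def period_ns_to_hz(period_ns: int) -> float:
    """Frequency in Hz that corresponds to a period given in nanoseconds."""
    return NS_PER_SECOND / period_ns


def scale_all(settings_ns: dict, factor: int) -> dict:
    """Multiply every setting by the same factor, keeping integer nanoseconds."""
    return {name: int(value * factor) for name, value in settings_ns.items()}


# The CONFIG_HZ values mentioned in the text, expressed as periods.
for hz in (1000, 250, 100):
    print(f"{hz:>4} Hz -> {hz_to_period_ns(hz) / 1e6:g} ms")

# Placeholder starting values (assumed for illustration, not measured).
current = {
    "sched_latency_ns": 6_000_000,
    "sched_min_granularity_ns": 1_000_000,
    "sched_wakeup_granularity_ns": 1_000_000,
}

# Scaling by 10 takes a 1 ms minimum granularity to 10 ms (100 Hz).
for name, value in scale_all(current, 10).items():
    print(f"{name} = {value}")
```

Scaling everything uniformly keeps the ratios between the settings unchanged, which is the appeal of the simple approach; the per-setting tuning mentioned above would replace `scale_all` with individually chosen values.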

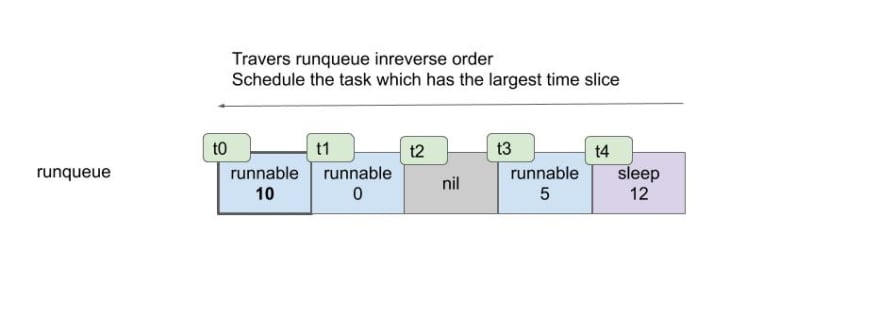

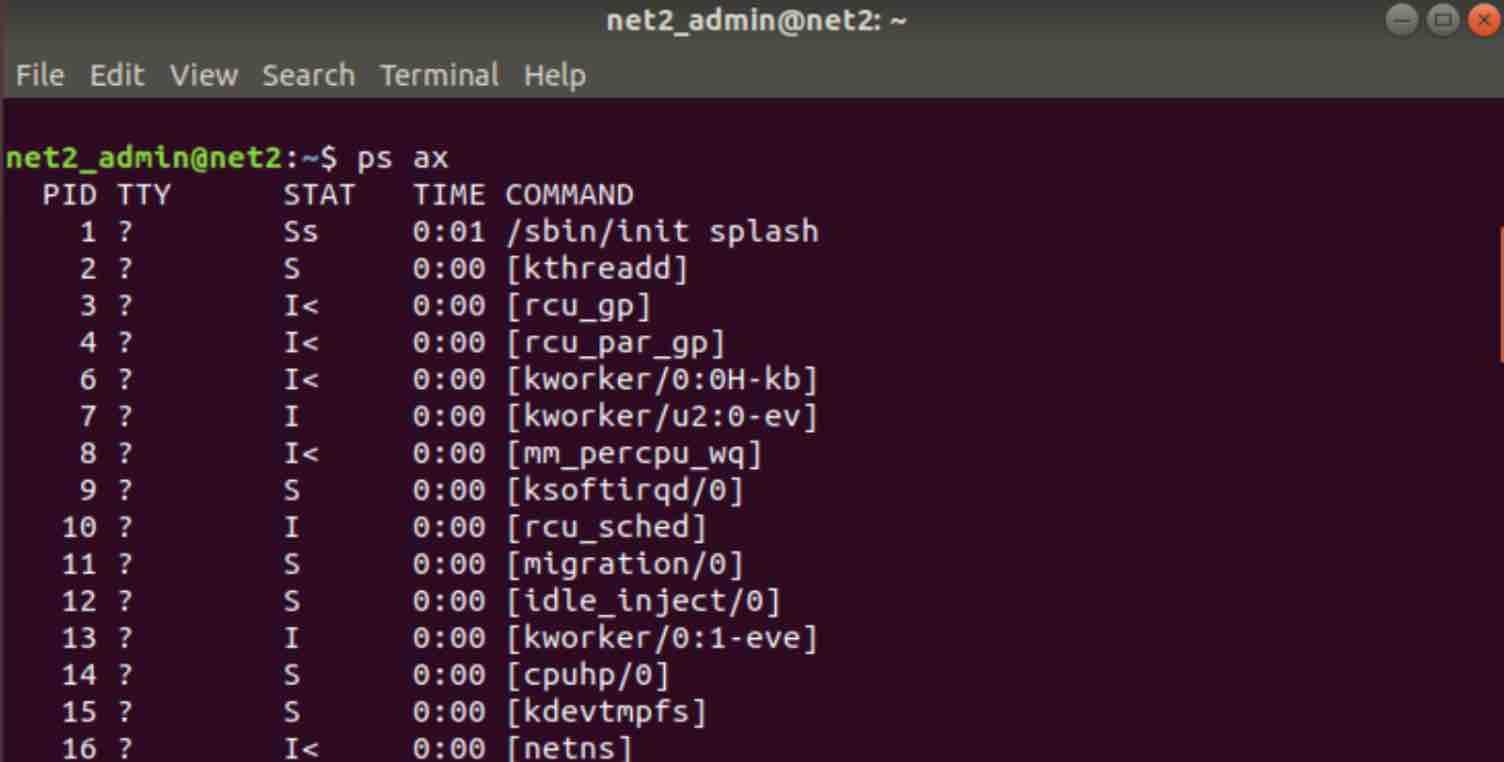

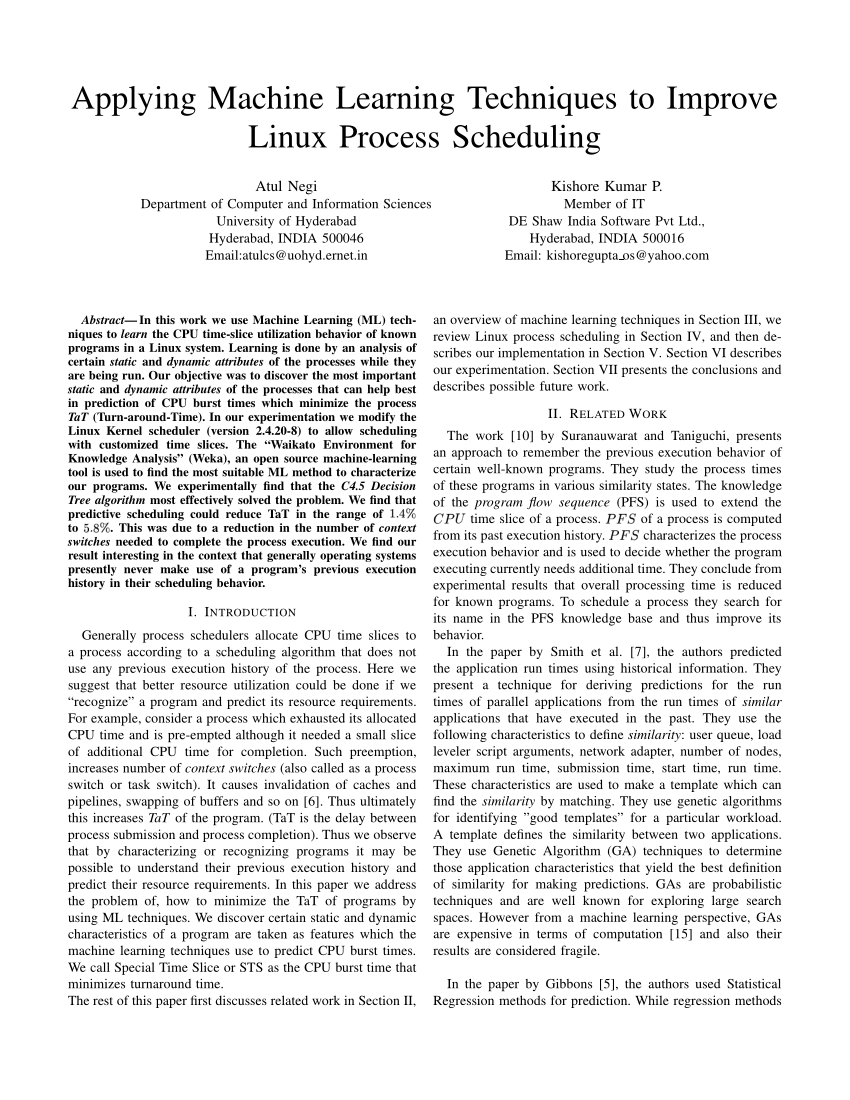

So I want to know what the current situation is. Is the CPU scheduler time-slice still based on CONFIG_HZ?

Also, in practice build-time tuning is very limiting. For Linux distributions, it is much more practical if they can have a single kernel per CPU architecture, and allow configuring it at runtime or at least at boot-time. If tuning the time-slice is still relevant, is there a new method which does not lock it down at build-time?

For most RHEL7 servers, Red Hat suggests increasing sched_min_granularity_ns to 10 ms and sched_wakeup_granularity_ns to 15 ms. (Technically the linked source says 10 μs, which would be 1000 times smaller.) We can try to understand this suggestion in more detail.

On current Linux kernels, CPU time slices are allocated to tasks by CFS, the Completely Fair Scheduler. CFS can be tuned using a few sysctl settings. You can set sysctls temporarily until the next reboot, or permanently in a configuration file which is applied on each boot. To learn how to apply this type of setting, look up "sysctl" or read the short introduction here.

sched_min_granularity_ns is the most prominent setting.
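As a rough illustration of the sysctl approach, the Python sketch below converts the Red Hat suggestion from milliseconds to nanoseconds, renders it as `key = value` lines of the kind a sysctl configuration file uses, and tries to read the current values. It assumes the settings are exposed under `/proc/sys/kernel/`, which may not hold on every kernel version, so a missing file is reported rather than treated as an error. The function names are hypothetical helpers, not part of any library.

```python
from pathlib import Path

# Red Hat's suggested values from the text, in milliseconds.
SUGGESTED_MS = {
    "kernel.sched_min_granularity_ns": 10,
    "kernel.sched_wakeup_granularity_ns": 15,
}


def ms_to_ns(ms: float) -> int:
    """Convert milliseconds to the integer nanoseconds these settings expect."""
    return int(ms * 1_000_000)


def sysctl_path(name: str, root: str = "/proc/sys") -> Path:
    """Map a dotted sysctl name to its file under the given root."""
    return Path(root) / name.replace(".", "/")


def read_sysctl(name: str, root: str = "/proc/sys"):
    """Return the current integer value, or None if it is missing or unreadable."""
    try:
        return int(sysctl_path(name, root).read_text().strip())
    except (OSError, ValueError):
        return None


def render_conf(settings_ms: dict) -> str:
    """Render 'key = value' lines suitable for a sysctl configuration file."""
    lines = [f"{name} = {ms_to_ns(ms)}" for name, ms in settings_ms.items()]
    return "\n".join(lines) + "\n"


# Show what this machine currently uses (None means the setting is not exposed here).
for name in SUGGESTED_MS:
    print(f"current {name}: {read_sysctl(name)}")

# Print the fragment; nothing is written to the system by this sketch.
print(render_conf(SUGGESTED_MS), end="")
```

The sketch only prints; applying the values temporarily or saving them to a configuration file is left as a deliberate manual step, so the effect can be measured before it is made permanent.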

Is it possible to increase the length of time-slices, which the Linux CPU scheduler allows a process to run for? How could I do this?

Background knowledge

This question asks how to reduce how frequently the kernel will force a switch between different processes running on the same CPU. This is the kernel feature described as "pre-emptive multi-tasking". This feature is generally good, because it stops an individual process hogging the CPU and making the system completely non-responsive. However, switching between processes has a cost, so there is a tradeoff.

If you have one process which uses all the CPU time it can get, and another process which interacts with the user, then switching more frequently can reduce delayed responses. If you have two processes which use all the CPU time they can get, then switching less frequently can allow them to get more work done in the same time.

I am posting this based on my initial reaction to the question "How to change Linux context-switch frequency?" I do not personally want to change the timeslice. However, I vaguely remember this being a thing, with the CONFIG_HZ build-time option.
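The tradeoff described in the background can be sketched with a toy model in Python. It assumes a simple round-robin between CPU-bound processes where every time-slice is followed by one switch of fixed cost. The switch cost used here is an invented, illustrative number, not a measurement, and real schedulers are more complicated than this.

```python
def useful_fraction(slice_ns: int, switch_cost_ns: int) -> float:
    """Share of CPU time spent on real work if each slice ends with one switch."""
    return slice_ns / (slice_ns + switch_cost_ns)


def worst_case_wait_ns(slice_ns: int, other_runnable: int) -> int:
    """Longest wait before a process runs again if every other process uses a full slice."""
    return slice_ns * other_runnable


# Assumed cost of one context switch (illustrative only).
SWITCH_COST_NS = 5_000

for slice_ms in (1, 4, 10):
    slice_ns = slice_ms * 1_000_000
    share = useful_fraction(slice_ns, SWITCH_COST_NS)
    wait_ms = worst_case_wait_ns(slice_ns, other_runnable=1) / 1e6
    print(f"{slice_ms:>2} ms slice: {share:.4%} useful work, up to {wait_ms:g} ms wait")
```

Longer slices push the useful share closer to 100% but lengthen the wait an interactive process may see, which is the throughput-versus-latency tradeoff in miniature.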
